An open-source, standards-native agent observability backend. HFAO ingests traces through vendor-neutral OTel GenAI + OpenInference (no proprietary wire format), makes every observability primitive queryable by any MCP client, and closes the eval-trace loop in a single schema — traces become dataset items become evaluator inputs become scores become monitor triggers become traces.

Three Deployment Shapes From One Codebase

- Single-file HF Space — DuckDB embedded, the whole observatory running in one hosted notebook

- Docker Compose self-host — ClickHouse + Granian OTLP ingest, Redis Streams buffer

- Kubernetes enterprise — ClickHouse Cloud, Helm-deployed, multi-tenant
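One way to picture "three shapes from one codebase" is a single profile table that picks the storage and buffer backends per deployment. The sketch below is illustrative only: `Shape`, `Deployment`, `PROFILES`, and `resolve` are hypothetical names, not part of the hfao package. The in-memory buffer for the Space shape, the Redis Streams buffer for Kubernetes, and the single-tenant setting for Compose are assumptions the text does not confirm.

```python
from dataclasses import dataclass
from enum import Enum


class Shape(Enum):
    SPACE = "space"            # single-file HF Space
    COMPOSE = "compose"        # Docker Compose self-host
    KUBERNETES = "kubernetes"  # Helm-deployed enterprise


@dataclass(frozen=True)
class Deployment:
    storage: str        # "duckdb" or "clickhouse"
    buffer: str         # "memory" or "redis-streams"
    multi_tenant: bool


# Hypothetical profile table. Storage choices follow the list above;
# the buffer for SPACE and KUBERNETES and the tenancy flag for COMPOSE
# are assumptions made for illustration.
PROFILES = {
    Shape.SPACE: Deployment("duckdb", "memory", False),
    Shape.COMPOSE: Deployment("clickhouse", "redis-streams", False),
    Shape.KUBERNETES: Deployment("clickhouse", "redis-streams", True),
}


def resolve(shape_name: str) -> Deployment:
    """Look up the deployment profile for a shape name such as "compose"."""
    return PROFILES[Shape(shape_name)]
```

Under this layout the rest of the code would depend only on the resolved `Deployment`, so the same modules could run unchanged in all three shapes.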

Three Pillars Commercial Competitors Can’t Easily Copy

- Standards-nativeness done right. OTel GenAI + OpenInference on ingest, full OTLP compatibility, no proprietary wire lock-in. Commercial vendors hedge this because it commoditizes their backend — HFAO has no reason to.

- MCP-native queryability. Traces, scores, causal edges, costs, prompts, datasets, experiments — all queryable by any MCP client. The observability backend agents debug themselves with.

- Closed eval-trace loop. One schema, one system. Not three SaaS products glued together.
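The closed loop described above (trace to dataset item to evaluator input to score to monitor trigger and back to a trace) can be sketched with a few plain records. This is a minimal illustration, not HFAO's schema: every class, field, and function name here is hypothetical, and the exact-match evaluator and threshold monitor stand in for whatever the computation plane actually provides.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Trace:
    trace_id: str
    name: str
    input: str
    output: str
    parent_id: Optional[str] = None  # trace that caused this one, if any


@dataclass(frozen=True)
class DatasetItem:
    source_trace_id: str
    input: str
    expected: str


@dataclass(frozen=True)
class Score:
    trace_id: str
    evaluator: str
    value: float


def to_dataset_item(trace: Trace, expected: str) -> DatasetItem:
    """Promote a captured trace to a dataset item with a reference answer."""
    return DatasetItem(trace.trace_id, trace.input, expected)


def exact_match(output: str, expected: str) -> float:
    """Toy evaluator: 1.0 on an exact (whitespace-trimmed) match, else 0.0."""
    return 1.0 if output.strip() == expected.strip() else 0.0


def evaluate(item: DatasetItem, output: str, name: str,
             fn: Callable[[str, str], float]) -> Score:
    """Run an evaluator on an output and attach the score to the source trace."""
    return Score(item.source_trace_id, name, fn(output, item.expected))


def monitor(score: Score, threshold: float) -> Optional[Trace]:
    """Emit a new trace, linked to the scored one, when a score is too low."""
    if score.value >= threshold:
        return None
    return Trace(
        trace_id=f"monitor:{score.trace_id}",
        name="monitor.trigger",
        input=f"{score.evaluator}={score.value}",
        output="alert",
        parent_id=score.trace_id,
    )
```

Because the monitor's output is itself a `Trace`, it can re-enter the same pipeline, which is the sense in which the loop closes within one schema.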

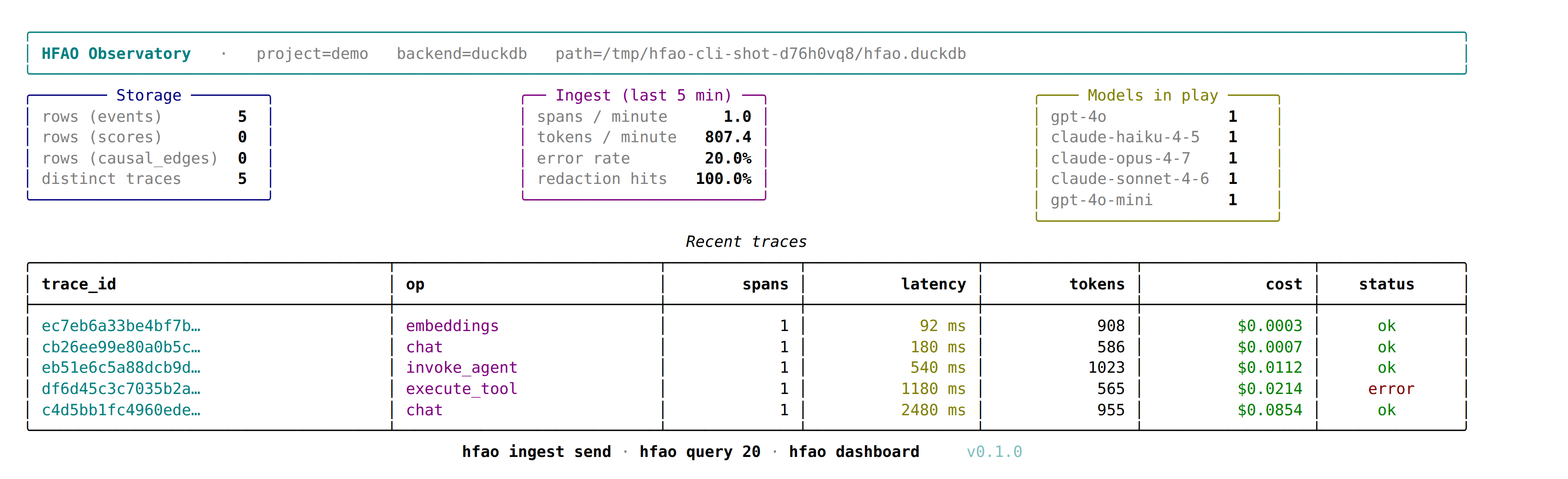

Current State

- Storage plane shipped — ClickHouse backend (§4.3 DDL, §6.1 Docker shape) and DuckDB backend with parity tests between the two

- Ingest plane shipped — OTel GenAI + OpenInference normalizer (§5), Granian OTLP server (§7.1), PII redaction (§6.5), bounded buffer with memory + Redis Streams (§7.1–§7.3), body offload at 64 KiB (§6.6)

- Acceptance harness — per-module tests (AC §5 wire, AC §6 storage, AC §7 ingest)

- Spec discipline — SPEC.md is locked at v1.0.0. Every commit cites a spec section. Silent deviation is forbidden — ambiguity gets an Open Question in §16, a proposed default, and a decision deadline
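As a rough illustration of the ingest-plane items above, the sketch below normalizes span attributes from both wire conventions into one row and offloads bodies larger than 64 KiB. The attribute keys on the left of `KEY_MAP` come from the OTel GenAI and OpenInference conventions; the unified column names, the function names, the in-memory `blobs` store, the SHA-256 reference, and the choice to treat exactly 64 KiB as inline are all assumptions made for illustration, not the behavior defined in SPEC.md.

```python
import hashlib
from typing import Any, Dict

BODY_OFFLOAD_BYTES = 64 * 1024  # threshold named in the text

# Source attribute key -> hypothetical unified column name.
KEY_MAP = {
    # OTel GenAI semantic conventions
    "gen_ai.request.model": "model",
    "gen_ai.usage.input_tokens": "input_tokens",
    "gen_ai.usage.output_tokens": "output_tokens",
    # OpenInference semantic conventions
    "llm.model_name": "model",
    "llm.token_count.prompt": "input_tokens",
    "llm.token_count.completion": "output_tokens",
}


def normalize(attrs: Dict[str, Any]) -> Dict[str, Any]:
    """Map known attributes from either convention onto unified columns.

    Unknown keys are dropped; if both conventions set the same column,
    the first one encountered wins.
    """
    row: Dict[str, Any] = {}
    for key, value in attrs.items():
        column = KEY_MAP.get(key)
        if column is not None:
            row.setdefault(column, value)
    return row


def offload_body(body: str, blobs: Dict[str, bytes]) -> Dict[str, Any]:
    """Keep small bodies inline; store large ones in `blobs` by content hash."""
    raw = body.encode("utf-8")
    if len(raw) <= BODY_OFFLOAD_BYTES:
        return {"body": body, "body_ref": None}
    digest = hashlib.sha256(raw).hexdigest()
    blobs[digest] = raw
    return {"body": None, "body_ref": digest}
```

In a real deployment the `blobs` dictionary would be replaced by an object store or side table, and redaction would presumably run before the body is measured and stored.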

Future Directions

- MCP server surface (§9) — the queryability pillar

- Cockpit UI (Gradio 6 single-file, §10) and Console UI (SvelteKit analyst surface, §11)

- Closed eval-trace loop (§8 computation plane — causal attribution, evals, costs, monitors)

- Framework integrations (§12) and full multi-tenancy (§13)

Positioning

HFAO targets parity with LangSmith / Langfuse / Phoenix / Braintrust / Weave / Helicone on tracing, datasets, evals, prompts, annotation, cost, and monitoring, and aims to go beyond them on the three pillars above.

Technical Stack

- License: Apache-2.0

- Language: Python (hfao package), TypeScript (@hfao/sdk-ts, @hfao/console)

- Storage: ClickHouse (self-host + enterprise), DuckDB (single-file)

- Ingest: Granian OTLP server, Redis Streams buffer, PII redaction

- Wire: OpenTelemetry GenAI + OpenInference (OTLP-compatible)

- Deploy: single-file HF Space, Docker Compose, Kubernetes (Helm)

- Container registry: ghcr.io/f8n-ai/hfao-*
